OX HE Tutorial 1M

Tutorial: Highly Available OX HE Setup for up to 1 Million users

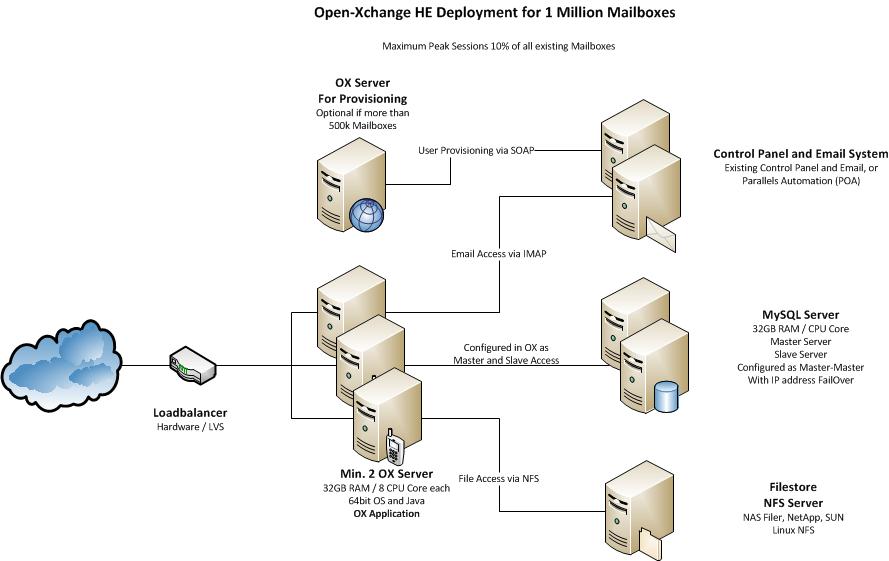

This article describes what you need for a typical OX HE setup for up to 1,000,000 users that is fully clustered, highly available, and scales flexibly.

It contains everything you need to:

- Understand the design of the OX HE setup including additional services

- Install the whole system based on the relevant articles

- Find pointers to the next steps of integration

System Design

The system is designed to provide maximum functionality and availability with a minimum of necessary hardware. If the services on one OX server fail, this is transparently handled by the load balancer. If one MySQL server fails, it is sufficient to take over the IP address on the other MySQL server in the cluster to stay fully in operation.

Core Components for OX HE

- Minimum two (recommended three) OX HE servers (HW recommendation: 32GB RAM / 8 cores each)

- Minimum one MySQL cluster with two servers in Master-Master configuration (HW recommendation: 32GB RAM / 8 cores each)

- NFS Server to store documents and files

- Recommended for more than 500,000 mailboxes: one OX HE server dedicated to user provisioning (HW recommendation: 16GB RAM / 4 cores)

Infrastructure Components not delivered by OX

- An email system providing IMAP and SMTP

- A control panel for creation and administration of users

- A Load Balancer in front of the OX servers (optional, recommended)

Overview Installation Steps

To deploy the described OX setup, the following steps need to be done.

Mandatory Steps

- Initialize and configure MySQL database servers

- Install and configure OX on all servers

Steps depending on your environment

- Implement Load Balancer

- Connect Control Panel

- Connect Email System

Recommended Optional Next Steps

- Automated Frontend Tests

- Upsell Plugin

- Mobile Autoconfiguration

- Automatic FailOver

- Branding

Mandatory Installation Steps - Instructions & Recommendations

The following steps need to be done in every case to get OX up and running:

Initialize and configure MySQL database on both servers

MySQL will be set up in a Master-Master configuration to ensure data consistency on both servers. If one machine fails, the other machine will take over all functionality.

Overview

You can choose between Galera and Master/Slave replication. We recommend Galera for higher redundancy, easier operations, and synchronous semantics (so you can run OX without our "replication monitor"). For POC or demo setups, a single standalone database might be sufficient.

Standalone database setup

Preparations

Our configuration process includes wiping and reinitializing the datadir. This is usually not a problem in a fresh installation. If you want to upgrade an existing database server, please be prepared to wipe the datadir, i.e. take a mysqldump for later restoration into the properly configured master.

mysqldump --databases configdb oxdb_{5..14} > backup.sql

Be sure to verify the list of databases.
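One way to verify the list is to derive it from the server instead of relying on the brace expansion. The following is a minimal sketch, assuming all UserDBs follow the oxdb_<number> naming used in the example; the function name ox_dump_databases is hypothetical and not part of any OX tooling.

```shell
#!/bin/sh
# Filter a list of database names (one per line on stdin) down to the
# OX databases: configdb plus everything matching oxdb_<number>.
# Assumption: your UserDBs follow the oxdb_<number> naming from the example.
ox_dump_databases() {
    grep -E '^(configdb|oxdb_[0-9]+)$'
}

# Typical use (not executed here):
#   mysql -N -B -e 'SHOW DATABASES' | ox_dump_databases
#   mysqldump --databases $(mysql -N -B -e 'SHOW DATABASES' | ox_dump_databases) > backup.sql
```

Compare the printed list with what you expect before taking the dump; databases with other names are silently skipped by this filter.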

Installation

Note: the following list is neither exhaustive nor an authoritative statement about supported MySQL flavors / versions. Please consult the official support / system requirements statement.

Please follow the upstream docs for your preferred flavor to get the software installed on your system.

- MariaDB (10.1, 10.2): https://downloads.mariadb.org/

- Oracle MySQL Community Server (5.6, 5.7): https://dev.mysql.com/downloads/mysql/

After installation, double-check that the service is not running (or stop it), as we need to perform some reconfigurations.

service mysql stop

Configuration

MySQL configuration advice is given in our MySQL configuration article. Please consult that page for configuration information and create configuration files as described there.

Some settings we recommend to change require that the database gets re-initialized. We assume you don't have data there (since we are covering a fresh install) or you have taken a backup for later restore as explained above in the Preparations section.

cd /var/lib/

mv mysql mysql.old.datadir

mkdir mysql

chown mysql.mysql mysql

# mariadb

mysql_install_db

# mariadb 10.2

mysql_install_db --user=mysql

# oracle 5.6

mysql_install_db -u mysql

# oracle 5.7

mysqld --initialize-insecure --user=mysql

(Don't worry about the "insecure" in the option name: it only means the root password is empty until we set it in the next steps.)

Start the service. The actual command depends on your OS and on the MySQL flavor.

service mysql start

Run mysql_secure_installation for a "secure by default" installation:

mysql_secure_installation

That tool will ask for the current root password (which is empty by default) and subsequently questions like:

Change the root password? [Y/n]

Remove anonymous users? [Y/n]

Disallow root login remotely? [Y/n]

Remove test database and access to it? [Y/n]

Reload privilege tables now? [Y/n]

You should answer all these questions with "yes".

Configure a strong password for the MySQL root user.

The further steps in this guide omit the -u and -p arguments to the MySQL client. Rather than passing them on the command line [1], we recommend placing the credentials in a file such as /root/.my.cnf, for example:

[client]

user=root

password=wip9Phae3Beijeed
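Because this file holds the root password, it should be readable by its owner only. The following is a minimal sketch of creating it with restrictive permissions; the function name write_client_cnf is hypothetical, and the password in the usage comment is a placeholder you need to replace.

```shell
#!/bin/sh
# Write a MySQL client credentials file that only its owner can read.
# Arguments: target path, user name, password (all supplied by the caller).
write_client_cnf() {
    cnf_path=$1
    cnf_user=$2
    cnf_pass=$3
    old_umask=$(umask)
    umask 077                      # a newly created file gets mode 0600
    printf '[client]\nuser=%s\npassword=%s\n' "$cnf_user" "$cnf_pass" > "$cnf_path"
    umask "$old_umask"
    chmod 600 "$cnf_path"          # also tighten a pre-existing file
}

# Typical use (not executed here; replace the placeholder password):
#   write_client_cnf /root/.my.cnf root 'your-root-password'
```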

Make sure the service is enabled by the OS's init system. The actual command depends on your OS and on the MySQL flavor.

systemctl enable mysql.service

You should now be able to restore your previously taken backup.

# If you took a dump for restore before

mysql < backup.sql

Configure OX to use with a standalone database

Not much special wisdom here. OX was designed to be used with master/slave databases, and a standalone master works just as well if we register it as a master and do not register a slave.

For the ConfigDB, configdb.properties allows configuration of a writeUrl (which is set to the correct values if you use oxinstaller with the correct argument --configdb-writehost).

The single database is then used for reading and writing.

For the individual UserDBs, use registerdatabase -m true.

Galera database setup

Preparations

Our configuration process includes wiping and reinitializing the datadir. This is usually not a problem in a fresh installation. If you want to upgrade an existing database to Galera cluster, please be prepared to wipe the datadir, i.e. take a mysqldump for later restoration into the properly configured master.

Depending on the flavor of the current database, this can be something like

# mariadb or oracle mysql without GTIDs

mysqldump --databases configdb oxdb_{5..14} > backup.sql

# mysql 5.6 with GTIDs... we don't want GTIDs here

mysqldump --databases --set-gtid-purged=OFF configdb oxdb_{5..14} > backup.sql

Be sure to verify the list of databases.

Installation

Please follow the upstream docs for your preferred flavor to get the software installed on your system.

- Percona XtraDB Cluster (5.6, 5.7): https://www.percona.com/doc/percona-xtradb-cluster/LATEST/install/index.html

- MariaDB Galera Cluster (10.0, 10.1): https://mariadb.com/kb/en/library/getting-started-with-mariadb-galera-cluster/ (Note: with 10.0, socat is required but is not a package dependency, so you need to install socat explicitly)

After installation, double-check that the service is not running (or stop it), as we need to perform some reconfigurations.

service mysql stop

Configuration

Galera-specific MySQL configuration advice is included in our main MySQL configuration article. Please consult that page for configuration information.

That page suggests a setup where we add three custom config files to /etc/mysql/ox.conf.d/: ox.cnf for general tuning/sizing, wsrep.cnf for clusterwide galera configuration, and host.cnf for host-specific settings.

Adjust the general settings and tunings in ox.cnf according to your sizing etc.

Adjust wsrep.cnf to reflect local paths, cluster member addresses, etc.

Adjust host.cnf to give node-local IPs, etc.

Version-specific hints:

# percona 5.6: unknown variable 'pxc_strict_mode=ENFORCING' ... unset that one

# mariadb 10.1: add wsrep_on=ON

# mariadb 10.0 and 10.1: set wsrep_node_incoming_address=192.168.1.22:3306 in host.cnf, otherwise the status wsrep_incoming_addresses might not be shown correctly(?!)

Some settings we recommend to change require that the database gets re-initialized. We assume you don't have data there (since we are covering a fresh install) or you have taken a backup for later restore as explained above in the Preparations section.

cd /var/lib/

mv mysql mysql.old.datadir

mkdir mysql

chown mysql.mysql mysql

# mariadb 10.0 and 10.1

mysql_install_db

# mariadb 10.2

mysql_install_db --user=mysql

# percona 5.6

mysql_install_db --user=mysql

# percona 5.7

mysqld --initialize-insecure --user=mysql

(Don't worry about the "insecure" in the option name: it only means the root password is empty until we set it in the next steps.)

Cluster startup

Typically on startup a Galera node tries to join a cluster, and if it fails, it will exit. Thus, when no cluster nodes are running, the first cluster node to be started needs to be told to not try to join a cluster, but rather bootstrap a new cluster. The exact arguments vary from version to version and from flavor to flavor.

First node

So we initialize the cluster bootstrap on the first node:

# percona 5.6, 5.7

service mysql bootstrap-pxc

# mariadb 10.0

service mysql bootstrap

# mariadb 10.1, 10.2

galera_new_cluster

Run mysql_secure_installation for a "secure by default" installation:

mysql_secure_installation

The further steps in this guide omit the -u and -p arguments to the MySQL client. Rather than passing them on the command line [2], we recommend placing the credentials in a file such as /root/.my.cnf, for example:

[client]

user=root

password=wip9Phae3Beijeed

We need a Galera replication user:

CREATE USER 'sstuser'@'localhost' IDENTIFIED BY 'OpIdjijwef0';

-- percona 5.6, mariadb 10.0

GRANT RELOAD, LOCK TABLES, REPLICATION CLIENT ON *.* TO 'sstuser'@'localhost';

-- percona 5.7, mariadb 10.1, 10.2

GRANT PROCESS, RELOAD, LOCK TABLES, REPLICATION CLIENT ON *.* TO 'sstuser'@'localhost';

FLUSH PRIVILEGES;

(Debian specific note: MariaDB provided startup scripts use the distro's mechanism of verifying startup/shutdown using a system user, so we create that as well:

# mariadb 10.0, 10.1, 10.2

GRANT ALL PRIVILEGES ON *.* TO "debian-sys-maint"@"localhost" IDENTIFIED BY "adBexthTsI5TaEps";

If you do this, you need to synchronize the /etc/mysql/debian.cnf file from the first node to the other nodes as well.)

Other nodes

On the other nodes, we only need to restart the service now, to trigger a full state transfer from the first node to the other nodes.

We recommend doing this serially, so that one state transfer completes before the next one starts.

First node (continued)

Only applicable if you used galera_new_cluster before rather than the service script: In order to get the systemctl status consistent, restart the service on the first node:

# mariadb 10.1, 10.2: restart the service so that the systemctl status is consistent

mysqladmin shutdown

service mysql bootstrap

Verify the replication

The key tool to verify replication status is

mysql> show status like "%wsrep%";

This will give a lot of output. You want to verify in particular

+------------------------------+--------------------------------------+
| Variable_name                | Value                                |
+------------------------------+--------------------------------------+
| wsrep_cluster_size           | 3                                    |
| wsrep_cluster_status         | Primary                              |
| wsrep_local_state            | 4                                    |
| wsrep_local_state_comment    | Synced                               |
| wsrep_ready                  | ON                                   |
+------------------------------+--------------------------------------+

You can also explicitly verify replication by creating / inserting DBs, tables, rows on one node and select on other nodes.
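The status check can also be scripted. The following is a minimal sketch, assuming the status is fetched in the client's batch mode (tab-separated, no header); the function name galera_status_ok is hypothetical. It only tests the five values listed above and is not a replacement for a full health check.

```shell
#!/bin/sh
# Check Galera status lines ("name<TAB>value", one per line on stdin)
# against the values listed above. Argument: expected cluster size.
# Returns 0 if all checks pass, 1 otherwise.
galera_status_ok() {
    awk -F '\t' -v want_size="$1" '
        $1 == "wsrep_cluster_size"        { size = $2 }
        $1 == "wsrep_cluster_status"      { status = $2 }
        $1 == "wsrep_local_state"         { state = $2 }
        $1 == "wsrep_local_state_comment" { comment = $2 }
        $1 == "wsrep_ready"               { ready = $2 }
        END {
            ok = (size == want_size && status == "Primary" &&
                  state == 4 && comment == "Synced" && ready == "ON")
            exit (ok ? 0 : 1)
        }'
}

# Typical use on a cluster node (not executed here):
#   mysql -N -B -e "SHOW STATUS LIKE 'wsrep%'" | galera_status_ok 3 && echo "node looks healthy"
```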

Troubleshooting

The logs are helpful. Always.

Common mistakes are listed below.

If the Galera module does not get loaded at all:

- Configuration settings in my.cnf which are incompatible with Galera

- Wrong path of the shared object providing the Galera plugin in wsrep.cnf (wsrep_provider)

If the first node starts, but the second / third nodes cannot be added to the cluster:

- User for the replication not created correctly on the first Galera node

- SST fails because prerequisite packages are missing or installed in the wrong version (not everything is hardcoded in package dependencies -- make sure you have percona-xtrabackup installed in the correct version, and also socat). If SST fails, do not only look into MySQL's primary error logs, but also into the logfiles from the SST tool in /var/lib/mysql on the donor node.

Notes about configuring OX for use with Galera

Write requests

Open-Xchange supports Galera as database backend only in the configuration where all writes are directed to one Galera node. For availability, it makes sense not to configure one Galera node's IP address directly, but rather to employ an HA solution which offers active-passive functionality. Options for this are discussed below.

Read requests

Read requests can be directed to any node in the Galera cluster. Our standard recommendation is to use a loadbalancer to implement round-robin over all nodes in a Galera cluster for the read requests. But you can also choose to use a dedicated read node (the same node as the write node, or a different one). Each of the approaches has its own advantages.

- Load balancer based setup: Read requests get distributed round-robin between the Galera nodes. In theory, distributing the load of the read requests yields lower latencies and more throughput, but this has not been benchmarked yet. For a discussion of available loadbalancers, see the next section. OX-wise, in this configuration, you have two alternatives:

  - The Galera option wsrep_causal_reads=1 enables you to configure OX with its replication monitor disabled (com.openexchange.database.replicationMonitor=false in configdb.properties). In our experience this is the setup which performs best: turning off the replication monitor significantly reduces the commits on the DB, and thus the write operations per second on the underlying storage, which outweighs the drawback of higher commit latency due to fully synchronous mode.

  - Alternatively, you can run Galera with wsrep_causal_reads=0 and switch on OX's built-in replication monitor. This is also a valid setup.

- Use a designated floating IP for the read requests: This eliminates the need for a load balancer. With this option you will not gain any performance, but the quantitative benefit of load balancing is unclear anyhow.

- Use the floating IP for the writes also for the reads: In this scenario, you direct all database queries to one Galera node only, and the other two nodes only get queries if that node fails. In this case, you can even use wsrep_causal_reads=0 while still having OX's built-in replication monitor switched off. However, we do not expect this option to be superior to the round-robin loadbalancer approach.

Loadbalancer options

While the JDBC driver has some round-robin load balancing capabilities built-in, we don't recommend it for production use since it cannot check the health state of the Galera nodes.

Loadbalancers used for OX -> Galera loadbalancing should be able to implement active-passive instances for the write requests, and active-active (round-robin) instances for the read requests. (If they cannot implement active-passive, you can still use a floating IP for that purpose.) Furthermore, node health checks must not only work on the TCP level (by a simple connect), but must query the Galera health status periodically, evaluating Galera WSREP status variables. Otherwise split-brain scenarios or other bad states cannot be detected. For an example of such a health check, see our Clustercheck page.

Some customers use loadbalancing appliances. If the (virtual) infrastructure offers "loadbalancer" instances, it is important to check that they satisfy the given requirements. Often this is not the case. In particular, a simple "DNS round robin" approach is not viable.

LVS/ipvsadm/keepalived

If you want to build your own loadbalancers based on Linux, we usually recommend LVS (Linux Virtual Server) controlled by Keepalived. LVS is a set of kernel modules implementing an L4 loadbalancer which performs quite well. Keepalived is a userspace daemon that controls LVS rules, using health checks to reconfigure them if required. Keepalived / LVS requires one (or, for availability, two) dedicated Linux nodes to run on. This can be a disadvantage for some installations, but it usually pays off. We provide some configuration information on Keepalived here.

MariaDB Maxscale

Since Maxscale became GA in 2015, it seems to have undergone significant stability, performance and functional improvements. We are currently experimenting with Maxscale and share our installation / configuration knowledge here. It looks quite promising and might become the standard replacement for HAproxy, although we still presume Keepalived offers superior robustness and performance, at the cost of requiring one (or more) dedicated loadbalancer nodes.

HAproxy

In cases where the Keepalived based approach is not feasible due to its requirements on the infrastructure, it is also possible to use an HAproxy based solution where HAproxy processes run on each of the OX nodes, configured with one round-robin and one active/passive instance. OX then connects to the local HAproxy instance. It is vital to configure HAproxy timeouts different from the defaults; otherwise HAproxy will kill active DB connections, causing errors. Be aware that in large installations the number of (distributed) HAproxy instances can get quite large. Some configuration hints for HAproxy are available here.

Master/Slave database setup

While we also support "legacy" (pre-GTID) Master/Slave replication, we recommend using GTID based replication for easier setup and failure recovery. Support for GTID based replication was added with OX 7.8.0.

GTID has been available since MySQL 5.6, so no 5.5 installation instructions below, sorry. We try to be generic in this documentation (thus, applicable to Oracle Community Edition and MariaDB) and point out differences where needed. Note: Instructions below include information about Oracle Community MySQL 5.7 which is not yet formally supported.

Preparations

Our configuration process includes wiping and reinitializing the datadir. This is usually not a problem in a fresh installation. If you want to upgrade an existing database to GTID master-slave, please be prepared to wipe the datadir, i.e. take a mysqldump for later restoration into the properly configured master.

Depending on the flavor of the current database, this can be something like

# mariadb or oracle mysql without GTIDs

mysqldump --databases configdb oxdb_{5..14} > backup.sql

# mysql 5.6 with GTIDs... we don't want GTIDs here

mysqldump --databases --set-gtid-purged=OFF configdb oxdb_{5..14} > backup.sql

Be sure to verify the list of databases.

Installation

Software installation is identical for master and slave.

Please follow the vendors' installation instructions.

- Oracle Community Edition: https://dev.mysql.com/doc/mysql-apt-repo-quick-guide/en/

- MariaDB (10.0, 10.1): https://downloads.mariadb.org/mariadb/repositories/

Stop the service (if it is running):

service mysql stop

Configuration

Configuration as per configuration files is also identical for master and slave.

Consult My.cnf for general recommendations on how to configure databases for usage with OX.

For GTID based replication, make sure you add some configurables to a new /etc/mysql/ox.conf.d/gtid.cnf file (assuming you are following our proposed schema of adding a "!includedir /etc/mysql/ox.conf.d/" directive to /etc/mysql/my.cnf):

# GTID

log-bin=mysql-bin

server-id=...

log_slave_updates = ON

Oracle Community Edition: we need to add also

enforce_gtid_consistency = ON

gtid_mode = ON

(GTID mode is on by default on MariaDB.)

Use a unique server-id for each server, like 1 for the master and 2 for the slave. For more complicated setups (like multiple slaves), adjust accordingly.

Since applying our configuration / sizing requires reinitialization of the MySQL datadir, we wipe/recreate it. Caution: this assumes we are running an empty database. If there is data in the database you want to keep, use mysqldump. See the Preparations section above.

So, to initialize the datadir:

cd /var/lib/

mv mysql mysql.old.datadir

mkdir mysql

chown mysql.mysql mysql

(When coming from an existing installation, be sure to wipe also old binlogs. They can confuse the server on startup. Their location varies by configuration.)

The step to initialize the datadir is different for the different DBs:

# MariaDB 10.0, 10.1

mysql_install_db

# MariaDB 10.2

mysql_install_db --user=mysql

# Oracle 5.6

mysql_install_db -u mysql

# Oracle 5.7

mysqld --initialize-insecure --user=mysql

(Don't worry about the "insecure" in the option name: it only means the root password is empty until we set it in the next steps.)

Then:

service mysql restart

mysql_secure_installation

We want to emphasize the last step: do run mysql_secure_installation.

Steps up to here apply to both the designated master and slave. The next steps will apply to the master.

Replication Setup

Master Setup

Create a replication user on the master (but, as always, pick your own password, and use the same password in the slave setup below):

mysql -e "CREATE USER 'repl'@'gtid-slave.localdomain' IDENTIFIED BY 'IvIjyoffod2'; GRANT REPLICATION SLAVE ON *.* TO 'repl'@'gtid-slave.localdomain';"

Now would also be the time to restore a previously created mysqldump, or add other users you need for administration, monitoring etc (like debian-sys-maint@localhost, for example). Adding the OX users is explained below ("Creating Open-Xchange user").

# If you took a dump for restore before

mysql < backup.sql

To prepare for the initial sync of the slave, set the master read-only:

mysql -e "SET @@global.read_only = ON;"

Create a dump to initialize the slave:

# MariaDB

mysqldump --all-databases --triggers --routines --events --master-data --gtid > master.sql

# Oracle

mysqldump --all-databases --triggers --routines --events --set-gtid-purged=ON > master.sql

Transfer to the slave:

scp master.sql gtid-slave:

Slave Setup

Configure the replication master settings. Note we don't need complicated binlog position settings etc with GTID.

Yet again DB-specific (use the repl user password from above):

# MariaDB

mysql -e 'CHANGE MASTER TO MASTER_HOST="gtid-master.localdomain", MASTER_USER="repl", MASTER_PASSWORD="IvIjyoffod2";'

# Oracle

mysql -e "CHANGE MASTER TO MASTER_HOST='gtid-master.localdomain', MASTER_USER='repl', MASTER_PASSWORD='IvIjyoffod2', MASTER_AUTO_POSITION=1;"

# https://www.percona.com/blog/2013/02/08/how-to-createrestore-a-slave-using-gtid-replication-in-mysql-5-6/

mysql -e "RESET MASTER;"

Read the master dump:

mysql < master.sql

Start replication on the slave:

mysql -e 'START SLAVE;'

mysql -e 'SHOW SLAVE STATUS\G'
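In the status output, the two fields to look at first are Slave_IO_Running and Slave_SQL_Running; both should read "Yes". The following is a minimal sketch for checking them in a script; the function name slave_threads_running is hypothetical, and it deliberately ignores everything else in the output (lag, errors).

```shell
#!/bin/sh
# Read the output of SHOW SLAVE STATUS\G from stdin and succeed only if
# both replication threads report "Yes".
slave_threads_running() {
    awk -F ': ' '
        { gsub(/^[ \t]+/, "", $1) }          # field names are right-aligned
        $1 == "Slave_IO_Running"  { io = $2 }
        $1 == "Slave_SQL_Running" { sql = $2 }
        END {
            ok = (io == "Yes" && sql == "Yes")
            exit (ok ? 0 : 1)
        }'
}

# Typical use on the slave (not executed here):
#   mysql -e 'SHOW SLAVE STATUS\G' | slave_threads_running || echo "replication is not running" >&2
```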

Master Setup (continued)

Finally, unset read-only on the master:

# on the master

mysql -e "SET @@global.read_only = OFF;"

Configure OX to use with Master/Slave replication

Not much special wisdom here. OX was designed to be used with master/slave databases. For the ConfigDB, configdb.properties allows configuration of a readUrl and writeUrl (both of which are set to the correct values if you use oxinstaller with the correct arguments --configdb-readhost, --configdb-writehost).

(Obviously, the master is for writing and the slave is for reading.)

For the individual UserDBs, use registerdatabase -m true for the masters and registerdatabase -m false -M ... for the respective slaves.

Be sure to have enabled the replication monitor in configdb.properties: com.openexchange.database.replicationMonitor=true (which is the default). While GTID can show synchronous semantics, it is specified to silently fall back to asynchronous in certain circumstances, so synchronicity is not guaranteed.

We recommend, though, not to register the databases directly by their native hostname or IP, but rather to use some kind of HA system in order to be able to easily move a floating/failover IP from the master to the slave in case of master failure. Configuring and running such systems (such as corosync/pacemaker or keepalived) is out of scope of this documentation, however.

Creating Open-Xchange user

Now set up access for the Open-Xchange Server database user 'openexchange' to configdb and the oxdb for both groupware server addresses. These databases do not exist yet, but will be created during the Open-Xchange Server installation.

Notes:

- Please use a real password.

- The IPs in this example belong to the two different Open-Xchange Servers, please adjust them accordingly.

- If using a database on the same host as the middleware (usually done for POCs and demo installations), you also need to grant the privileges to the localhost host.

- Consult AppSuite:DB_user_privileges (or grep GRANT /opt/open-xchange/sbin/initconfigdb) for an up-to-date list of required privileges. The following statement was correct as of the time of writing this section.

mysql> GRANT CREATE, LOCK TABLES, REFERENCES, INDEX, DROP, DELETE, ALTER, SELECT, UPDATE, INSERT, CREATE TEMPORARY TABLES, SHOW VIEW, SHOW DATABASES ON *.* TO 'openexchange'@'10.20.30.213' IDENTIFIED BY 'IntyoyntOat1' WITH GRANT OPTION;

mysql> GRANT CREATE, LOCK TABLES, REFERENCES, INDEX, DROP, DELETE, ALTER, SELECT, UPDATE, INSERT, CREATE TEMPORARY TABLES, SHOW VIEW, SHOW DATABASES ON *.* TO 'openexchange'@'10.20.30.215' IDENTIFIED BY 'IntyoyntOat1' WITH GRANT OPTION;

Install and configure OX on both servers

OX will be installed on at least two servers. It will be configured to write to the first MySQL database and to read from the second MySQL database in one cluster. This distributes the load as evenly as possible during normal operation. During failover, the working server takes over the IP address of the failed MySQL server, so the system stays operable.

The NFS server will be mounted on all machines and registered as filestore.

Distributed file storage

The distributed file storage will be set up on the MySQL database master server. Of course it is possible to use a dedicated file server or an already existing storage system, however this guide does not cover that. This has several reasons:

- Open-Xchange Server does not require much I/O in typical operation

- Data for groupware objects like the Infostore is stored in the file storage and file metadata is stored in the database. Consistency between the database and the file storage is critical.

Installation of the NFS server

Open-Xchange Server is able to access various storage backends; NFS (Network File System) is a mature and proven one. Install the following packages on the MySQL master server to enable NFS storage:

$ apt-get install nfs-kernel-server nfs-common portmap

Create a directory for the Open-Xchange Server file storage.

$ mkdir /var/opt/filestore

Open-Xchange Server runs as user open-xchange. Create this user account on the NFS server; it is required for accessing the NFS export later. NFS maps the user id (uid) and group id (gid), so they need to be identical on the Open-Xchange Server nodes and the NFS server.

$ useradd open-xchange

Check the uid and gid. In this example they are 1001:1001; the actual values depend on which users already exist on the system.

$ grep open-xchange /etc/passwd

open-xchange:x:1001:1001::/home/open-xchange:/bin/sh

Make the newly created user own the filestore at the NFS server

$ chown open-xchange:open-xchange /var/opt/filestore

Configure the NFS server to provide this directory to both Open-Xchange Server nodes in read and write mode. Enter the uid and gid of the open-xchange user to the NFS export.

$ vim /etc/exports

/var/opt/filestore 10.20.30.213(rw,no_subtree_check,all_squash,anonuid=1001,anongid=1001) 10.20.30.215(rw,no_subtree_check,all_squash,anonuid=1001,anongid=1001)
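With more than two Open-Xchange Server nodes the export line gets long and easy to mistype. The following is a minimal sketch that assembles it from a list of client addresses, using the same export options as the example; the function name exports_line is hypothetical.

```shell
#!/bin/sh
# Print one /etc/exports line.
# Arguments: exported directory, uid, gid, then one or more client addresses.
exports_line() {
    dir=$1
    uid=$2
    gid=$3
    shift 3
    line=$dir
    for client in "$@"; do
        line="$line $client(rw,no_subtree_check,all_squash,anonuid=$uid,anongid=$gid)"
    done
    printf '%s\n' "$line"
}

# Typical use (not executed here): review the output, then add it to /etc/exports
#   exports_line /var/opt/filestore 1001 1001 10.20.30.213 10.20.30.215
```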

Make the changes effective to the running NFS server

$ exportfs -a

Installation of NFS clients

Both Open-Xchange Server machines are NFS clients since they mount the distributed file storage. It is critical that both Open-Xchange Server nodes can access the same file storage: because of session load balancing, a user may log in to either Open-Xchange Server.

Install required NFS client packages on both Open-Xchange Server nodes

$ apt-get install nfs-common portmap

Create mountpoints for the filestore at both Open-Xchange Server nodes

$ mkdir /var/opt/filestore/

Open-Xchange Server runs as user open-xchange. To let this user access the filestore, create a user account on all Open-Xchange Server nodes. NFS maps the user id (uid) and group id (gid) to the ones on the NFS server, so they need to be identical on the Open-Xchange Server nodes and the NFS server.

$ useradd open-xchange

$ grep open-xchange /etc/passwd

open-xchange:x:1001:1001::/home/open-xchange:/bin/sh
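Since a uid/gid mismatch only shows up later as permission errors on the filestore, it can help to compare the ids explicitly. The following is a minimal sketch; the function name passwd_ids is hypothetical, and the usage comment assumes you have ssh access from the OX node to the NFS server (10.20.30.217 in this example).

```shell
#!/bin/sh
# Print "uid:gid" for the given user from passwd-format lines on stdin.
passwd_ids() {
    awk -F ':' -v name="$1" '$1 == name { print $3 ":" $4 }'
}

# Typical use on an OX node (not executed here):
#   local_ids=$(getent passwd open-xchange | passwd_ids open-xchange)
#   remote_ids=$(ssh 10.20.30.217 getent passwd open-xchange | passwd_ids open-xchange)
#   [ "$local_ids" = "$remote_ids" ] || echo "uid/gid mismatch: $local_ids vs $remote_ids" >&2
```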

Add the NFS storage to the fstab configuration file to mount the storage automatically on boot at both Open-Xchange Server nodes

$ vim /etc/fstab

10.20.30.217:/var/opt/filestore /var/opt/filestore nfs defaults 0 0

Testing the distributed file storage

Mount the filestore manually on both Open-Xchange Server nodes to check if the connection works properly

$ mount /var/opt/filestore

To test the distributed storage, create a file on one Open-Xchange Server node as user open-xchange

$ su open-xchange

$ touch /var/opt/filestore/foo

Then check if the file is available and writeable at the other node also as user open-xchange

$ su open-xchange

$ ls -la /var/opt/filestore

$ rm /var/opt/filestore/foo

Session load balancing

Since configuration of system services for the corresponding operating system is already described in the general installation guides, this guide will focus on the specialties when creating a distributed setup. Please refer to the installation guides for configuration that is not mentioned in this guide.

The web server in this setup is a pure frontend server. This means it takes and responds to requests sent by a client but does not contain any groupware logic. All requests are forwarded to the Open-Xchange Servers through the AJP13 protocol. The configuration allows round-robin session load balancing: both Open-Xchange servers are configured as backends for answering requests, each with a 50% probability of being chosen. Once a new session is created, it is bound to the groupware server on which it was created.

For the web server we only need a very small set of packages, basically only packages whose names start with open-xchange-gui; most of the additional packages are language packs or plugins. Add the Open-Xchange software repository to the package manager configuration first. Then install the open-xchange-gui packages on the web server.

$ apt-get install open-xchange-configjump-generic-gui \
    open-xchange-gui open-xchange-gui-wizard-plugin-gui \
    open-xchange-online-help-de \
    open-xchange-online-help-en open-xchange-online-help-fr

This will install the Open-Xchange user interface, Apache 2 and several services as dependencies. The Apache module proxy_ajp handles all communication with the Open-Xchange Servers. Its configuration also contains the setup of the session balancing. Basically, it defines two backend nodes and forwards servlet paths to them based on the loadfactor. This setting can be customized in case the backend servers are not equal in terms of performance. The route property is important: it specifies a unique ID of a backend server and will be used when setting up the Open-Xchange Servers later. Please see the Apache mod_proxy_ajp documentation for more details.

$ vim /etc/apache2/conf.d/proxy_ajp.conf

<Location /servlet/axis2/services>

# restrict access to the soap provisioning API

Order Deny,Allow

Deny from all

Allow from 127.0.0.1

# you might add more ip addresses / networks here

# Allow from 192.168 10 172.16

</Location>

<IfModule mod_proxy_ajp.c>

ProxyRequests Off

<Proxy balancer://oxcluster>

Order deny,allow

Allow from all

# multiple server setups need to have the hostname inserted instead of localhost

BalancerMember ajp://10.20.30.213:8009 timeout=100 smax=0 ttl=60 retry=60 loadfactor=50 route=OX1

BalancerMember ajp://10.20.30.215:8009 timeout=100 smax=0 ttl=60 retry=60 loadfactor=50 route=OX2

ProxySet stickysession=JSESSIONID

</Proxy>

<Proxy /ajax>

ProxyPass balancer://oxcluster/ajax

</Proxy>

<Proxy /servlet>

ProxyPass balancer://oxcluster/servlet

</Proxy>

<Proxy /infostore>

ProxyPass balancer://oxcluster/infostore

</Proxy>

<Proxy /publications>

ProxyPass balancer://oxcluster/publications

</Proxy>

<Proxy /Microsoft-Server-ActiveSync>

ProxyPass balancer://oxcluster/Microsoft-Server-ActiveSync

</Proxy>

<Proxy /usm-json>

ProxyPass balancer://oxcluster/usm-json

</Proxy>

</IfModule>
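The effect of the loadfactor values can be checked before Apache is restarted. The following is a minimal sketch, not part of the Open-Xchange tooling: the function name balancer_shares is hypothetical, and it only assumes that each backend is declared on a single BalancerMember line as in the configuration above. It prints the share of new sessions each route should receive.

```shell
#!/bin/sh
# balancer_shares FILE (hypothetical helper)
# Prints "route share%" for every BalancerMember line found in FILE.
balancer_shares() {
    awk '
        $1 == "BalancerMember" {
            lf = 1                      # mod_proxy uses a loadfactor of 1 if none is set
            route = $2                  # fall back to the backend URL if no route is set
            for (i = 3; i <= NF; i++) {
                if ($i ~ /^loadfactor=/) lf = substr($i, 12)
                if ($i ~ /^route=/)      route = substr($i, 7)
            }
            factor[route] = lf
            total += lf
            order[++n] = route
        }
        END {
            for (i = 1; i <= n; i++)
                printf "%s %d%%\n", order[i], factor[order[i]] * 100 / total
        }
    ' "$1"
}
```

Called as balancer_shares /etc/apache2/conf.d/proxy_ajp.conf, the configuration above should report an equal share for OX1 and OX2.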

Restart the Apache 2 web server and check whether you can connect with a browser. By default, this configuration allows plain HTTP access. To protect your customers' privacy, the connection must be secured with HTTPS based on a valid certificate. It is also recommended to redirect all plain HTTP connections to HTTPS.

Enable the required Apache modules on the web server. See the general installation guides for more information about the configuration of expires and deflate.

$ a2enmod proxy && a2enmod proxy_ajp && a2enmod proxy_balancer && a2enmod expires && a2enmod deflate && a2enmod headers

Restart the Apache web server after applying all configuration changes.

$ /etc/init.d/apache2 restart
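After the restart it can be useful to confirm that all modules enabled above are actually loaded. The sketch below is only an illustration: the function name missing_apache_modules is hypothetical, and it assumes the usual "name_module (shared)" line format printed by apache2ctl -M. It reads that listing on standard input and prints the modules that are missing.

```shell
#!/bin/sh
# missing_apache_modules (hypothetical helper)
# Reads the output of "apache2ctl -M" on stdin and prints every module
# from the a2enmod list above that does not appear in it.
missing_apache_modules() {
    loaded=$(cat)
    for mod in proxy proxy_ajp proxy_balancer expires deflate headers; do
        printf '%s\n' "$loaded" | grep -q "^ *${mod}_module " || echo "$mod"
    done
}
```

A typical call would be apache2ctl -M | missing_apache_modules; no output means that all six modules are loaded.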

Configuring Open-Xchange Server

Install all relevant Open-Xchange Server packages on both groupware nodes after adding the Open-Xchange software repository to your package manager's configuration. Corresponding installation instructions for your distribution can be found here:

- Download and Installation Guide for Debian GNU/Linux 10.0 (Buster)

- Download and Installation Guide for Debian GNU/Linux 11.0 (Bullseye)

- Download and Installation Guide for RedHat Enterprise Linux 7

- Download and Installation Guide for CentOS 7

It is also possible to install both the backend and the frontend components on each node. The difference is this: with only the backend on each node, you need separate machines running the frontend in front of the backend nodes; with backend and frontend on each node, you only need a load balancer in front of the nodes.

Create the configdb database on the MySQL master. This step only needs to be performed on one of the Open-Xchange Server nodes.

$ /opt/open-xchange/sbin/initconfigdb --configdb-user=openexchange --configdb-pass=secret --configdb-host=10.20.30.217

Set up the Open-Xchange Server configuration. This step needs to be performed on both groupware nodes. Note that the --jkroute parameter must equal the route parameter assigned to that server in the web server's proxy_ajp load balancing configuration.

Node 1:

$ /opt/open-xchange/sbin/oxinstaller --servername=oxserver --configdb-readhost=10.20.30.219 --configdb-writehost=10.20.30.217 --configdb-user=openexchange --master-pass=secret --configdb-pass=secret --jkroute=OX1 --ajp-bind-port=*

Node 2:

$ /opt/open-xchange/sbin/oxinstaller --servername=oxserver --configdb-readhost=10.20.30.219 --configdb-writehost=10.20.30.217 --configdb-user=openexchange --master-pass=secret --configdb-pass=secret --jkroute=OX2 --ajp-bind-port=*
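Because a mismatch between --jkroute and the Apache route breaks session stickiness, it may help to check the value against the web server configuration before running oxinstaller. This is a minimal sketch under the assumption that a copy of proxy_ajp.conf is available locally; the function name route_configured is hypothetical.

```shell
#!/bin/sh
# route_configured ROUTE FILE (hypothetical helper)
# Succeeds if FILE contains a BalancerMember line with route=ROUTE.
route_configured() {
    awk -v want="route=$1" '
        $1 == "BalancerMember" {
            for (i = 2; i <= NF; i++)
                if ($i == want) found = 1
        }
        END { exit !found }
    ' "$2"
}
```

For example, route_configured OX1 proxy_ajp.conf || echo "route OX1 is not defined" would warn before node 1 is configured with a route that Apache does not know.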

Start the Open-Xchange daemon on one of the nodes. Wait a few seconds until it has started completely.

$ systemctl start open-xchange

Now register the Open-Xchange Server in the database. Note that a server is a whole cluster in this case. This step only needs to be performed on one of the Open-Xchange Server nodes.

$ /opt/open-xchange/sbin/registerserver -n oxserver -A oxadminmaster -P secret

Register the filestore. This step only needs to be performed on one of the Open-Xchange Server nodes. Note that the NFS export must be mounted at the same path on both groupware nodes.

$ /opt/open-xchange/sbin/registerfilestore -A oxadminmaster -P secret -t file:///var/opt/filestore

Now register the MySQL master database in configdb. This step only needs to be performed on one of the Open-Xchange Server nodes.

$ /opt/open-xchange/sbin/registerdatabase -A oxadminmaster -P secret --name oxdatabase --hostname 10.20.30.217 --dbuser openexchange --dbpasswd secret --master true
database 4 registered

Check the returned database ID, which is 4 in this case. This value is required to register the MySQL slave database in configdb. This step only needs to be performed on one of the Open-Xchange Server nodes.

$ /opt/open-xchange/sbin/registerdatabase -A oxadminmaster -P secret --name oxdatabase_slave --hostname 10.20.30.219 --dbuser openexchange --dbpasswd secret --master false --masterid=4
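If the two registerdatabase calls are scripted, the database ID can be taken from the output of the first call instead of being copied by hand. The following sketch assumes the output has exactly the form "database 4 registered" shown above; the function name database_id is hypothetical.

```shell
#!/bin/sh
# database_id (hypothetical helper)
# Reads registerdatabase output on stdin and prints the numeric ID
# from a line of the form "database <ID> registered".
database_id() {
    sed -n 's/^database \([0-9][0-9]*\) registered$/\1/p'
}

# Possible usage (not executed here):
#   master_id=$(/opt/open-xchange/sbin/registerdatabase ... --master true | database_id)
#   /opt/open-xchange/sbin/registerdatabase ... --master false --masterid="$master_id"
```

If the output does not match the expected form, the function prints nothing, so a script should stop when the captured ID is empty.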

Now start Open-Xchange Server on both groupware nodes.

$ systemctl stop open-xchange
$ systemctl start open-xchange

Create a new context and a testuser

$ /opt/open-xchange/sbin/createcontext -A oxadminmaster -P secret -c 1 -u oxadmin -d "Context Admin" -g Admin -s User -p secret -L defaultcontext -e oxadmin@example.com -q 1024 --access-combination-name=all

$ /opt/open-xchange/sbin/createuser -c 1 -A oxadmin -P secret -u testuser -d "Test User" -g Test -s User -p secret -e testuser@example.com
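For more than a handful of test users, the createuser call can be generated from a list. The sketch below is a dry run only: it prints the commands instead of executing them. The CSV layout (login, given name, surname, email), the function name print_createuser_commands and the placeholder variables CTX_ADMIN_PASS and INITIAL_PASS are assumptions made for this illustration; the createuser options are the ones used in the command above.

```shell
#!/bin/sh
# print_createuser_commands CONTEXT_ID (hypothetical helper)
# Reads CSV lines "login,given name,surname,email" on stdin and prints one
# createuser command per line. Passwords are left as shell variables that
# the operator has to set before running the printed commands.
print_createuser_commands() {
    ctx=$1
    while IFS=, read -r login given surname email; do
        [ -n "$login" ] || continue     # skip empty lines
        printf '%s -c %s -A oxadmin -P "$CTX_ADMIN_PASS" -u %s -d "%s %s" -g "%s" -s "%s" -p "$INITIAL_PASS" -e %s\n' \
            /opt/open-xchange/sbin/createuser "$ctx" "$login" \
            "$given" "$surname" "$given" "$surname" "$email"
    done
}
```

The printed commands can be reviewed first and then piped to sh once they look correct. For production provisioning, the SOAP interface described later in this guide is the better fit.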

Test Session load balancing

Apache is configured for 50:50 balancing between the two Open-Xchange Servers. Now that they are up and running, it is time to check whether this balancing works. This can be done by watching the Open-Xchange Server log files while a user logs in. Run tail on the open-xchange.log.0 file on both servers, then log in with the testuser. The log file of one of the servers should show something like:

$ tail -fn200 /var/log/open-xchange/open-xchange.log.0
[...]
INFO: Session created. ID: 31060fc80b9e44d38148ef4d5d19963d, Context: 1, User: 3

Then log out and log in again. This time, the session should be created on the other server. On the client side, the JSESSIONID cookie in the browser shows which server the user has logged in to through its trailing ".OX-" identifier. This identifier is set by Open-Xchange Server based on its AJP_JVM_ROUTE attribute.
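Both checks can be supported with two small helpers. This is a sketch under assumptions: it presumes the route is appended to the JSESSIONID value after the last dot (the sample cookie value used below is made up) and that session creation is logged with the text "Session created" as in the example above. The function names are hypothetical.

```shell
#!/bin/sh
# route_of_session COOKIE_VALUE (hypothetical helper)
# Prints the part of a JSESSIONID value after the last dot.
# Fails if the value carries no route suffix at all.
route_of_session() {
    case "$1" in
        *.*) printf '%s\n' "${1##*.}" ;;
        *)   return 1 ;;
    esac
}

# sessions_created LOGFILE (hypothetical helper)
# Counts the "Session created" lines in one Open-Xchange log file.
sessions_created() {
    grep -c 'Session created' "$1"
}
```

Running sessions_created /var/log/open-xchange/open-xchange.log.0 on both nodes after several logins should show counts that grow on both servers, not only on one.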

Network configuration

Open-Xchange Server uses multicast discovery to find other nodes. Once this discovery has been successful, the groupware nodes will establish TCP connections for cache communication.

Configure a multicast address for the servers' network. This needs to be done on all groupware nodes.

$ vim /etc/network/interfaces

[...]

iface eth0 inet static

[...]

post-up route add -net 224.0.0.0/8 dev eth0

Check the Open-Xchange Server cache configuration files /opt/open-xchange/etc/groupware/cache.ccf and /opt/open-xchange/etc/admindaemon/cache.ccf on all groupware nodes. Only the very last section (jcs.auxiliary.*) is relevant for distributed caching. Make sure the TcpServers attribute is commented out and the UdpDiscovery settings are active. Also check the cache configuration in /opt/open-xchange/etc/groupware/sessioncache.ccf.

# jcs.auxiliary.LTCP.attributes.TcpServers=127.0.0.1:57461
jcs.auxiliary.LTCP.attributes.TcpListenerPort=57462
jcs.auxiliary.LTCP.attributes.UdpDiscoveryAddr=224.0.0.1
jcs.auxiliary.LTCP.attributes.UdpDiscoveryPort=6780
jcs.auxiliary.LTCP.attributes.UdpDiscoveryEnabled=true

These settings configure Open-Xchange Server to discover other nodes through the multicast address 224.0.0.1 and UDP port 6780. Note that the TcpListenerPort property differs between the groupware and the admindaemon configuration files. This is required to avoid socket conflicts, because the property defines the TCP port on which each service listens for incoming connections from other groupware nodes.
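The checks described above can be repeated on every node with a short script. The following is a minimal sketch; the function names check_cache_ccf and listener_port are hypothetical, and only the property names quoted in this section are assumed.

```shell
#!/bin/sh
# check_cache_ccf FILE (hypothetical helper)
# Prints "ok" if TcpServers is commented out (or absent) and
# UdpDiscoveryEnabled=true is set; otherwise prints the problem and fails.
check_cache_ccf() {
    file=$1
    if grep -q '^[[:space:]]*jcs\.auxiliary\.LTCP\.attributes\.TcpServers=' "$file"; then
        echo "TcpServers is still active in $file"
        return 1
    fi
    if ! grep -q '^[[:space:]]*jcs\.auxiliary\.LTCP\.attributes\.UdpDiscoveryEnabled=true' "$file"; then
        echo "UdpDiscoveryEnabled=true not found in $file"
        return 1
    fi
    echo "ok"
}

# listener_port FILE (hypothetical helper)
# Prints the active TcpListenerPort value of FILE.
listener_port() {
    sed -n 's/^[[:space:]]*jcs\.auxiliary\.LTCP\.attributes\.TcpListenerPort=//p' "$1"
}
```

Comparing listener_port for the groupware and the admindaemon cache.ccf on one node should yield two different ports; identical values would point to the socket conflict mentioned above.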

Restart the networking to enable the new multicast address on both groupware nodes. Also restart the Open-Xchange Server processes on all nodes.

$ /etc/init.d/networking restart
$ /etc/init.d/open-xchange restart

Test the network settings

The new routing information for the multicast network should be available when printing the routing table.

$ route -n
[...]
224.0.0.0 0.0.0.0 255.0.0.0 U 0 0 0 eth0
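This check can also be automated, for example in a post-deployment script. The sketch below assumes the column layout that route -n prints (destination, gateway, genmask, flags, metric, ref, use, interface); the function name has_multicast_route is hypothetical.

```shell
#!/bin/sh
# has_multicast_route IFACE (hypothetical helper)
# Reads "route -n" output on stdin and succeeds if a 224.0.0.0/8 route
# (genmask 255.0.0.0) exists on the given interface.
has_multicast_route() {
    awk -v dev="$1" '
        $1 == "224.0.0.0" && $3 == "255.0.0.0" && $NF == dev { found = 1 }
        END { exit !found }
    '
}
```

A typical call would be route -n | has_multicast_route eth0 || echo "multicast route missing".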

TCP connections that are created after the UDP multicast discovery are shown with netstat.

$ netstat -tlpa | grep java | grep ESTABLISHED
Proto Recv-Q Send-Q Local Address Foreign Address State
tcp6 0 0 oxgw01:49103 oxgw02:57461 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:37912 oxgw02:57462 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:58849 oxgw02:49302 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:57462 oxgw02:46054 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:57462 oxgw01:41904 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:48628 oxgw02:57461 ESTABLISHED 3582/java
tcp6 0 0 oxgw01:57461 oxgw02:47115 ESTABLISHED 3582/java
tcp6 0 0 oxgw01:57461 oxgw02:57348 ESTABLISHED 3582/java
tcp6 0 0 oxgw01:57461 oxgw01:42589 ESTABLISHED 3582/java
tcp6 0 0 oxgw01:43960 oxgw02:57462 ESTABLISHED 3582/java
tcp6 0 0 oxgw01:41904 oxgw01:57462 ESTABLISHED 3582/java
tcp6 0 0 oxgw01:42589 oxgw01:57461 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:43786 oxgw02:57461 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:35196 oxgw02:58849 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:57462 oxgw02:44548 ESTABLISHED 3706/java
tcp6 0 0 oxgw01:57461 oxgw02:44893 ESTABLISHED 3582/java

How can those connections be verified? The last column shows the process ID (PID) of the local process that holds the established connection. In this case, PID 3706 is the Open-Xchange Groupware Daemon and PID 3582 is the Open-Xchange Administration Daemon. These services build mesh connections between each groupware, each admindaemon and each foldercache service. Some connections are used bidirectionally, so only one connection is visible; others use two connections (inbound and outbound), depending on the network responses. It is important that every service is connected to every other service, whereas the foldercache is only connected between two groupware services. It can take some time until all connections are established after Open-Xchange Server has been started. In this example, the first two lines indicate connections between the local groupware process and the remote admindaemon and groupware processes.
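Reading the netstat listing by eye becomes tedious with more than two nodes. As a rough aid, the sketch below condenses it to the distinct hosts reached on the two cache listener ports that appear in the sample output, 57461 and 57462. These port numbers are taken from this example only and must be adapted if the TcpListenerPort values differ; the function name cache_peers is hypothetical.

```shell
#!/bin/sh
# cache_peers (hypothetical helper)
# Reads netstat output on stdin and prints "port host" once for every
# remote endpoint of an ESTABLISHED connection on port 57461 or 57462.
cache_peers() {
    awk '
        $6 == "ESTABLISHED" {
            n = split($5, part, ":")    # foreign address is "host:port"
            port = part[n]
            if (port == "57461" || port == "57462") {
                host = $5
                sub(/:[0-9]+$/, "", host)
                print port, host
            }
        }
    ' | sort -u
}
```

A typical call would be netstat -tlpa | grep java | cache_peers. Every other groupware node should then appear once per listener port.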

You also should install and configure the OXtender for Business Mobility:

exchange active sync configuration for Open-Xchange

Installation Steps depending on your environment - Instructions & Recommendations

The following components need to be implemented in your environment.

Implement Load Balancer

A load balancer in front of the OX servers is necessary for this deployment size. It needs to handle the requests if one OX server fails.

If you already have a hardware load balancing solution in place, this can be used. OX is known to work with the standard load balancing solutions from BigIP, Barracuda, Foundry, ...

If you do not have a load balancing solution in place yet, we recommend using Keepalived as a reliable and cost-effective solution.

Read more about configuring Keepalived for Open-Xchange

Connect Control Panel

You need a Control Panel to create and edit users.

OX is designed to integrate into any solution you may already run in your environment, and also into widespread solutions such as the Parallels Control Panels.

If you do not run hosting services today and do not have a Control Panel in place, it is recommended to use Plesk to manage OX. With that combination you get a fully functional hosting platform containing everything you need.

Integrate your own Control Panel

If you already have a Control Panel in production, you should integrate OX with it. It is recommended to use the SOAP provisioning Interface for that purpose.

Read more about: Provisioning using SOAP

The Command Line Tools are a good starting point for testing and for understanding the necessary commands. They offer exactly the same calls as the SOAP API.

Read more about: Open-Xchange CLT

Integrate with Parallels Automation (POA)

Parallels Operations Automation (POA) is an operations support system (OSS) for service providers who want to differentiate their offerings in order to reduce customer churn and attract new customers. Additionally, the APS package adds a high-performance, best-in-class email service for Parallels Plesk Panel customers.

Authentication

To avoid password synchronization issues, it is recommended to use your existing email authentication mechanism within OX. You then do not need to add user passwords to OX; you simply use a plugin to authenticate against your IMAP server.

Read more about the IMAP Authentication Plugin

Connect Email System

Any email system providing IMAP and SMTP can be used as a backend for OX. The best experience has been made with the widespread Linux-based IMAP servers Dovecot, Cyrus and Courier.

Other IMAP servers need to be tested thoroughly before going into production.

There are several possibilities to implement the Email system:

- You already have an email system available: Nothing needs to be installed, OX just needs to be configured to use it

- You use Parallels Automation (POA): Nothing special needs to be done, everything you need is contained in the APS package

- You want to set up a new email system: It is recommended to use Dovecot, as it is very stable, fast, feature-rich and easy to scale

Dovecot Setup

If you want to set up a new email system based on Dovecot, it is recommended to use NFS as the storage backend and to install at least two Dovecot servers accessing this storage. This setup gives you the best scalability and high availability with a minimum of complexity and hardware.

Read more in the Dovecot documentation including a QuickConfiguration guide

Recommended Optional Next Steps

You will find plenty of additional documentation for customizing OX in our knowledge base.

When the main setup is completed, we recommend starting with the following articles to enhance your system and make it more attractive for your users.

Automated Frontend Tests

It is a good idea to verify the functionality of your freshly set up and integrated system. Our QA department does that with tests that run automatically on the web frontend. We publish these tests with every release and recommend using them to verify your environment after every update.

Read more about Automated_GUI_Tests

Monitoring / Statistics

It is recommended to implement at least a minimal monitoring and statistics solution to get an overview of the system's health. If you have a support contract with Open-Xchange, it is very helpful if support can access the monitoring graphs. Example scripts for basic monitoring with Munin are available.

Read more about installing and configuring Munin scripts for Open-Xchange

Upsell Plugin / Webmail Replacement

If you want to use your OX-based webmail system to upsell premium functions such as full groupware functionality or push to mobile phones, it is strongly recommended to use the in-app sales process.

Read more about Upsell

Branding

If you want OX to match your own corporate identity, including your logo, product name and possibly your colors, this can easily be achieved by changing the logos and stylesheets.

Read more about: Gui_Theming_Description

Read more about: Gui Branding Plugins

Read more about: Branding via the ConfigCascade

Backup

It is recommended to run regular backups of your OX installation. This can be done with any backup solution for Linux.

Read more about Backup your Open-Xchange installation

OX_HE_Tutorial_FailOver